The Token Standard

The AI Economy Runs on Three Conversions

Most coverage of artificial intelligence still treats the field as a capability race. Each new benchmark, each leaked frontier model, each rumored training run becomes a headline. The more consequential story, however, is no longer at the frontier. It is in the plumbing.

The center of gravity in AI is shifting from model capability to applied economics. The decisive question of the next decade is not which lab releases the smartest system. It is who can transform raw electricity into useful intelligence at the lowest cost, with the highest reliability, at planetary scale. That is a question of infrastructure, geography, and policy, far more than it is a question of research.

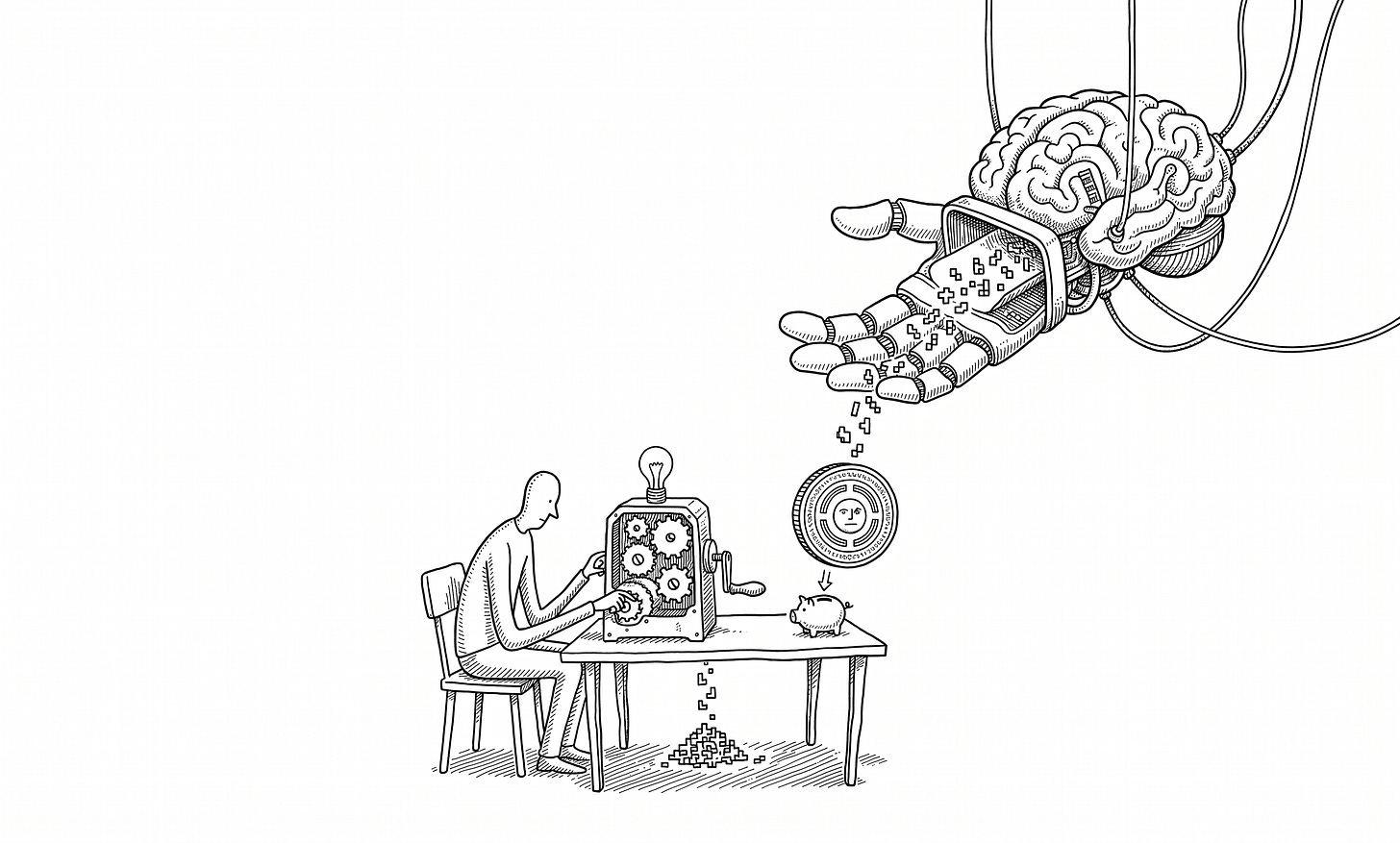

A useful way to see this is to think of the AI economy as a three-stage conversion chain. At one end sits the electron, the cheapest input modern society produces. In the middle sits the token, the unit of account for everything large language models do. At the other end sits productivity, the actual economic output that users and enterprises pay for. The full chain is electricity → tokens → productivity.

Each stage has its own economics, its own bottlenecks, and increasingly its own geopolitics. The cost of converting electricity to tokens depends on chips, algorithms, and data center design. The cost of converting tokens to productivity depends on model quality, prompt design, and human judgment. The two conversions are governed by entirely different forces. Treating them as one continuous “AI cost” obscures more than it reveals.
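To make the separation concrete, here is a minimal Python sketch of the chain as a two-stage cost model. Every field name and number in it (`price_per_kwh`, `kwh_per_million_tokens`, `hardware_per_million_tokens`, `tokens_per_task`, `success_rate`, and the values assigned to them) is a hypothetical placeholder chosen for readability. None of them is a measured figure for any real chip, model, or grid. The first method prices the electricity-to-token conversion. The second prices the token-to-productivity conversion and counts failed attempts as wasted tokens.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class ConversionChain:
    """Toy model of electricity -> tokens -> productivity.

    Every field is a hypothetical placeholder, not a measured value.
    """

    # Stage 1 (electricity -> tokens): shaped by chips, algorithms, data center design.
    price_per_kwh: float                # electricity price, $ per kWh
    kwh_per_million_tokens: float       # energy needed to generate one million tokens
    hardware_per_million_tokens: float  # amortized non-energy cost, $ per million tokens

    # Stage 2 (tokens -> productivity): shaped by model quality, prompts, human judgment.
    tokens_per_task: int                # tokens consumed per attempt at a task
    success_rate: float                 # fraction of attempts that yield usable output

    def cost_per_million_tokens(self) -> float:
        """Stage 1: dollars to produce one million tokens."""
        energy_cost = self.price_per_kwh * self.kwh_per_million_tokens
        return energy_cost + self.hardware_per_million_tokens

    def cost_per_useful_task(self) -> float:
        """Stage 2: dollars per successfully completed task.

        Failed attempts still consume tokens, so the per-attempt cost
        is divided by the success rate.
        """
        cost_per_token = self.cost_per_million_tokens() / 1_000_000
        return cost_per_token * self.tokens_per_task / self.success_rate


# Hypothetical baseline: $1.00 per million tokens, half of all attempts succeed.
baseline = ConversionChain(
    price_per_kwh=0.10,
    kwh_per_million_tokens=4.0,
    hardware_per_million_tokens=0.60,
    tokens_per_task=5_000,
    success_rate=0.5,
)

# Lever in stage 1 only: electricity at half the price.
cheaper_power = replace(baseline, price_per_kwh=0.05)

# Lever in stage 2 only: shorter prompts and a higher success rate.
better_workflow = replace(baseline, tokens_per_task=2_500, success_rate=0.8)

for name, chain in [("baseline", baseline),
                    ("cheaper power", cheaper_power),
                    ("better workflow", better_workflow)]:
    print(f"{name}: ${chain.cost_per_million_tokens():.2f} per 1M tokens, "
          f"${chain.cost_per_useful_task():.4f} per useful task")
# baseline: $1.00 per 1M tokens, $0.0100 per useful task
# cheaper power: $0.80 per 1M tokens, $0.0080 per useful task
# better workflow: $1.00 per 1M tokens, $0.0031 per useful task
```

In this toy setup, cheaper electricity and a tighter workflow both reduce the cost of a useful task. They do so through different parameters in different stages. A single blended “AI cost” figure would record the reduction but not which conversion produced it.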

This matters because the last decade of AI commentary has been dominated by a single mental model: scale wins. Add more parameters, more data, more compute, and capability follows. That model is not wrong, but it is incomplete. It explains how the field moved from GPT-2 to GPT-4. It does not explain what happens next.

What happens next is determined by the slope of three different cost curves: the cost of electricity, the cost of compute, and the cost of converting both into usable intelligence. Each curve has a different shape in different countries. Each is shaped by industrial policy choices made years or decades ago. And each creates a different kind of competitive advantage.

For a builder, this reframe has practical consequences. The choice of where to deploy, which model tier to buy from, and how to structure a workflow is no longer a purely technical decision. It is a bet on which conversion stage will compress fastest, in which jurisdiction. For a policymaker, it means that the AI race is not won at the chip foundry alone. It is also won, perhaps more decisively, at the substation.

The rest of this essay walks through the three stages in turn. It starts with electricity, where the most underappreciated story is unfolding in China. It moves to the electricity-to-token conversion, where the contest between hardware and algorithms is reshaping global cost structures. It ends with the tokens-to-productivity stage, where the pricing architecture of the AI economy is quietly being set in stone.

The Power Floor

In early March 2026, President Trump met with the heads of Amazon, Google, Microsoft, OpenAI, and three other technology companies. The meeting had a single agenda item: any new AI data center built in the United States would have to source its own power. Public grid capacity could not be drawn down for private model training.

That meeting captured something important. The constraint on American AI is no longer talent, capital, or even chips. It is electrons. AI workloads are projected to consume a rising share of US grid capacity over the next several years, and the grid is not expanding fast enough to absorb them. The result is a slow, structural ceiling on how cheaply American AI can be produced.

The same story is playing out across most advanced economies. German power prices recently spiked to a yearly high. India is rationing electricity to industry. Spain and Portugal recently lived through the largest blackout in their modern history. The energy crunch is not a temporary supply shock. It is the predictable consequence of treating electricity as a market commodity in countries that did not invest enough in long-cycle infrastructure.

Then there is China. In 2025, total Chinese electricity consumption crossed 10 trillion kilowatt-hours, a threshold no country had previously reached. That figure is more than double American consumption. It exceeds the combined annual usage of the European Union, Russia, India, and Japan. The Financial Times described the moment as the arrival of “the first electricity empire in human history.”

This did not happen by accident. From the founding of the People’s Republic, electricity was treated not as a commodity but as public infrastructure, on the same legal footing as roads and water. That framing produced seventy years of consistent state-led investment, regardless of the political and economic cycle. The Three Gorges Dam, an idea first sketched by Sun Yat-sen in 1919, became fully operational nearly a century later. The West-to-East Power Transmission Project, which moves electricity from the energy-rich interior to the demand-rich coast, became one of the largest infrastructure systems on earth.

The Three Gorges Project is one of the superprojects with the most significant overall benefits